The Perils of Personality

This is another installment in an ongoing meditation on Matt Jones' admonition that robots should BASAAP.

photo credit: Andreas Kristensson

It was my term for a bunch of things that encompass some 3rd rail issues for UI designers like proactive personalisation and interaction, examined in the work of Byron and Nass, exemplified by (and forever-after-vilified-as) Microsoft's Bob and Clippy (RIP). A bunch of things about bots and daemons, conversational interface. And lately, a bunch of things about machine learning – and for want of a better term, consumer-grade artificial intelligence. BASAAP is my way of thinking about avoiding the 'uncanny valley' in such things. Making smart things that don't try to be too smart and fail, and indeed, by design, make endearing failures in their attempts to learn and improve. Like puppies.

Matt Jones, B.A.S.A.A.P., BERG

Two things transited my node ("crossed my desk" is so antique, don't you think?) this week that served to illustrate how BASAAP could fail to avoid the 3rd rail.

First, this line from a review of the Mint Cleaning Robot posted on Amazon.

The personality of the bot is OK. It's more like a clinical, efficient nurse doing its job. It isn't quite as chipper as other cleaning bots but it gets the job done.

Ryan Mckenney, "Fantastic cleaning robot!", customer review on Amazon.com

The Mint is a self-driving Swiffer. It's the least personable thing and yet it has a personality. Of course it has a personality. Everything has a personality. A broom has a personality, one supposes. With the robot, you end up in situations where a perfectly functional machine loses marks because it rubs the user the wrong way.

photo credit: ramseymohsen

And when a BASAAP machine rubs users the wrong way, it can rub them in the really wrong way. Consider Siri's unfortunate inability to offer information about abortion, birth control, help after rape and help with domestic violence.

As Danny Sullivan notes, "Welcome to search scandals, Apple." Omissions or failures of the database reflect on the company providing the database, even though they aren't providing the content. Google learned this the hard way with the results for "Jew" (as opposed to "Jewish", or "Judaism"). Apple is learning it through women's issues.

Siri is a BASAAP machine. When Apple launched the service, I wrote about this for The Atlantic, arguing that her robotic voice and clever responses to confusing input would help her win our hearts. At the time, people were enamoured with this aspect of her, cataloguing all the funny responses she gave.

This is all well and good if she's making jokes about the meaning of life. It's much, much less good if she's covering up a bad search result with a snarky aside when the search result is about a rape crisis. Her charming personality stops being charming the minute she starts making inappropriate jokes.

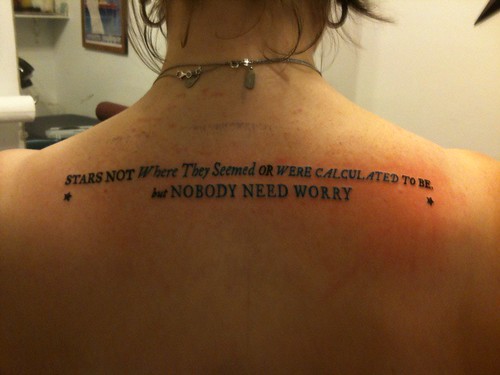

photo credit: timoni

There's your BASAAP 3rd rail right there.